I first tried programming in high school around 2008. I had my own desktop computer and installed Ubuntu to tinker with it. Controlling the machine from the shell felt fresh and fun.

Working via the shell makes automation easy, and I thought mastering Linux commands would let me truly use a computer well. My “programming” began with stringing commands together into simple shell scripts.

Later I wanted to learn real programming beyond shell scripts. The internet said “learn C first,” so I bought a beginner‑friendly C book titled “Shirouto Kuma‑kun’s C Class” (Japanese).

I “felt like I got it,” but looking back I probably would’ve struggled even with FizzBuzz.

Next I picked up a Windows game‑programming book.

I wrote a simple side‑scrolling action game in C, mostly by copying from the book. It ran, but I can’t say I truly understood it.

Heading into university, I was torn between studying information science and electrical/electronic engineering. I ended up at the University of Electro‑Communications in the Information and Communication Engineering course, where I could learn both.

In my first year there was a C programming class. I had forgotten much of what I learned in high school, so I started over. Some students finished assignments incredibly fast, which was eye‑opening. Emacs was also required for programming in class, and I became a keen Emacs user.

I wanted to get better and started learning Python. It’s one of the most popular languages now, but back then it wasn’t common in Japan. That rarity actually attracted me.

I remember reading “Minna no Python” as my first Python book and finally being able to write something practical.

During undergrad I didn’t work part‑time or hang out much; I mostly stayed home coding. Living alone, I sometimes wouldn’t see anyone for days, and I wondered whether my future would turn out okay.

After getting comfortable with Python, I wanted low‑level knowledge and read books in that area. One that stuck with me was “Hacking: The Art of Exploitation.” Although it’s mainly about security, it finally made C click for me.

I also studied assembly language.

I read many other books, but I wasn’t interested in application development at the time, so not many were practical.

I also didn’t plan to get a programming job. Coding was just a hobby, so practicality wasn’t top of mind.

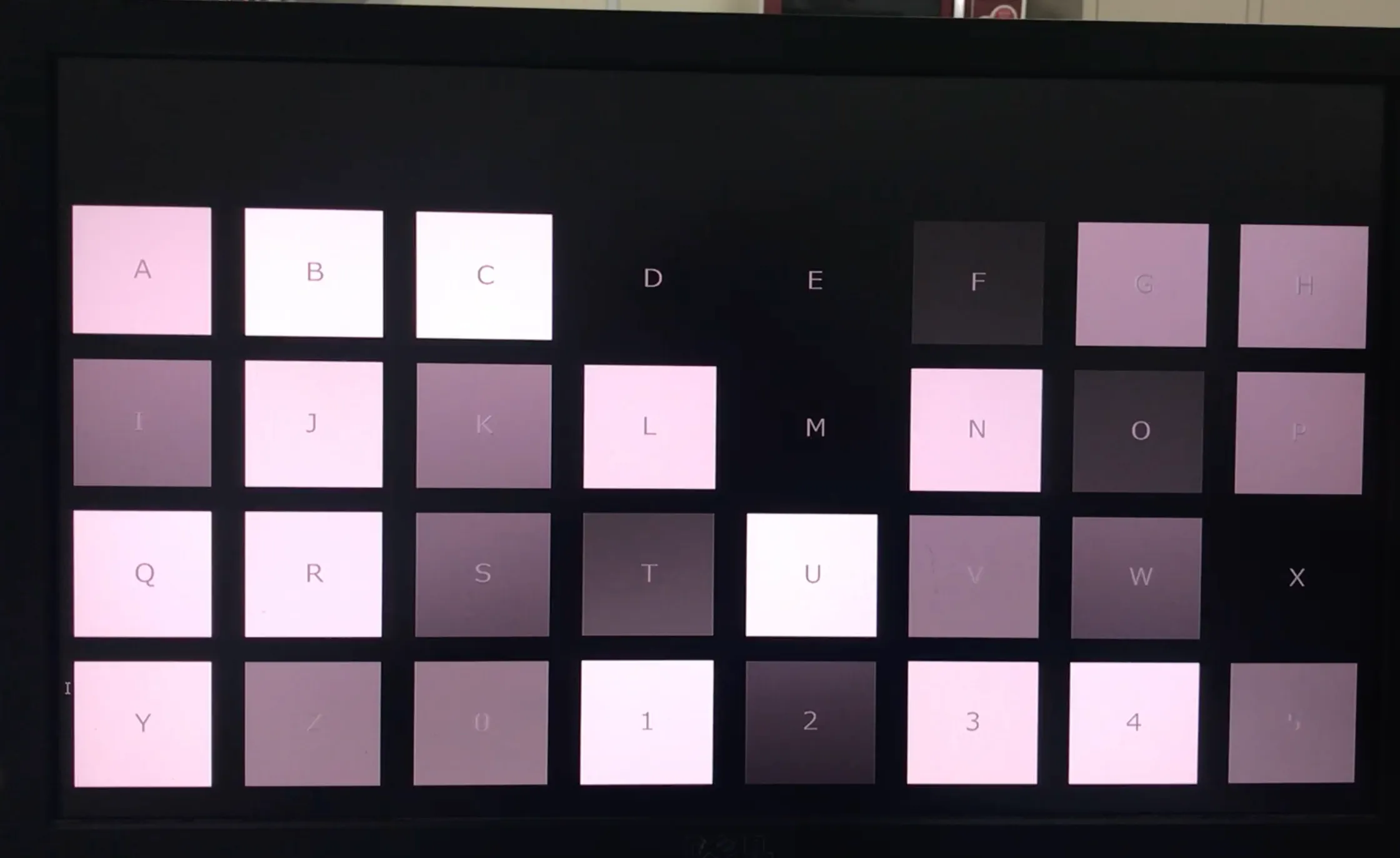

In my fourth year I joined a Brain‑Computer Interface (BCI) lab and mainly programmed with MATLAB and Octave. For experiments using visual evoked potentials, I wrote programs with Psychtoolbox to present visual stimuli on a PC.

Python was my strongest language then, so I pushed to build the experimental apps in Python. I called the EEG device’s DLL through ctypes, and a single mistake could blue‑screen the PC. Rough times.

Eventually the experiment app settled on MATLAB, and I used Python for analysis and visualization.

Around then, machine learning was gaining attention. I learned with scikit‑learn and, in a seminar, worked through Bishop’s yellow book “Pattern Recognition and Machine Learning.”

Later I studied data analysis with pandas, which I still use now and then.

In my first year of the master’s program, I started programming as a job through part‑time work and internships, where I worked on improving search and building ranking models. Large language models didn’t exist yet, so it was fun trying various feature‑engineering ideas to move metrics.

Doing near‑production work made me realize I’d accumulated a decent skill set.

My research was going okay too, and I enjoyed the deep‑dive nature of research, so I considered a PhD. But the internships were very close to real‑world development, and I felt I’d enjoy that kind of work as a job.

And that’s how I ended up becoming a professional programmer.